ChatGPT — an epochal event

A quiet shift, driven by large language models (LLMs), has been under way in the NLP ecosystem over the last few years. It culminated just a few days ago in an epochal event, with implications far beyond the NLP ecosystem.

OpenAI released ChatGPT, a large language model trained to capture user intent in its responses almost all the time. A key factor in ChatGPT’s success is its training process, the culmination of years of research in reinforcement learning and language modeling. Language modeling followed by supervised tuning and reinforcement learning yielded a model whose responses are hard to distinguish from a human’s, even though it occasionally “hallucinates” (a euphemism coined by researchers for false statements) and at times stubbornly defends a wrong answer (e.g. asserting that 3599 is a prime number despite acknowledging that it is composite, 3599 = 59 * 61).
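The arithmetic in that example is easy to verify independently. Here is a minimal sketch using plain trial division; the helper name `smallest_factor` is ours and purely illustrative, and it has nothing to do with how ChatGPT itself reasons about numbers.

```python
def smallest_factor(n: int) -> int:
    """Return the smallest factor of n greater than 1 (n itself when n is prime)."""
    if n < 2:
        raise ValueError("n must be at least 2")
    d = 2
    # Only divisors up to sqrt(n) need checking: any larger factor
    # would have to pair with a smaller one we have already tried.
    while d * d <= n:
        if n % d == 0:
            return d
        d += 1
    return n


n = 3599
p = smallest_factor(n)
q = n // p
is_prime = (p == n)

print(f"{n} = {p} * {q}, prime: {is_prime}")  # 3599 = 59 * 61, prime: False
```

Since 3599 = 60² − 1 = (60 − 1)(60 + 1), the factorization can also be seen by hand, which makes the model's insistence on primality all the more striking.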

OpenAI published a blog post on ChatGPT. A paper is not out yet, so there has been a flurry of activity online to glean more details about the model. Some of the community’s guesses and observations are likely to be revised or even proven wrong when the paper is published. For this reason, any guesses or estimates below are explicitly marked as such to distinguish them from known facts.

ChatGPT under the hood — a guess

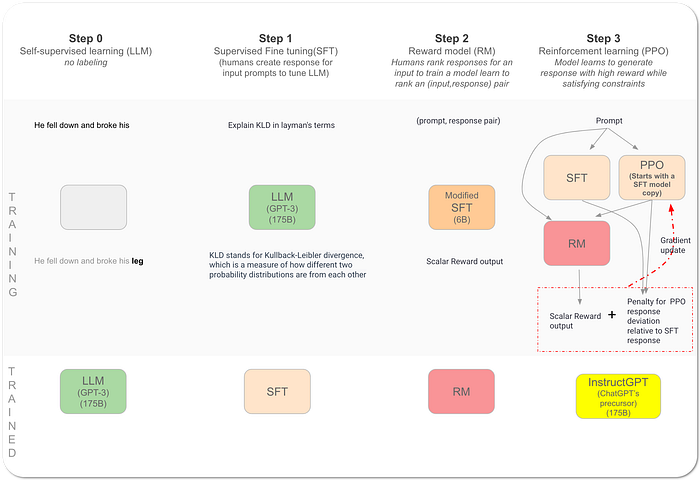

Here is a summary, synthesized from what we know so far, of the steps used to create InstructGPT, a precursor model to ChatGPT. Because the published illustration of the InstructGPT training process is almost identical to the figure in the ChatGPT blog post, the steps below describe the InstructGPT training procedure as an approximate proxy for ChatGPT training.

- Step 0. ChatGPT’s performance corroborates that a large language model (LLM) alone, particularly an autoregressive model that learns by predicting the next word given the prior words, is insufficient for the use case of human interaction with a model. All the open-source models, including PubMedGPT (released yesterday), Galactica, BLOOM, OPT, and GPT-NeoX, are evidence of the fact that it is not easy, with just prompt engineering…