Trends in input representation for state-of-the-art NLP models (2019)

The most natural and intuitive way to represent words fed to a language model (or any NLP task model) is to keep them as they are: each word is a single unit.

For example, if we are training a language model on a corpus, we would traditionally represent each word as a vector and have the model learn word embeddings, that is, values for each dimension of that vector. At test time, given a new sentence, the language model can compute how likely that sentence is using those learned word embeddings. However, when we represent words as single units, we may come across a word at test time that we never saw during training. We are then forced to treat it as an out-of-vocabulary (OOV) word, which hurts model performance. The OOV problem affects any NLP model that represents its input as words.
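To make the OOV problem concrete, here is a minimal sketch of a word-level vocabulary built from a tiny training corpus. The corpus, the `<unk>` placeholder token, and the `encode` helper are all made up for illustration and are not taken from any particular library or model.

```python
# Toy illustration of the OOV problem with word-level input.
# The corpus and the "<unk>" token are assumptions made for this sketch.
train_sentences = ["the cat sat on the mat", "the dog sat on the rug"]

UNK = "<unk>"  # hypothetical placeholder id for words never seen in training
vocab = {UNK: 0}
for sentence in train_sentences:
    for word in sentence.split():
        # Assign the next free id the first time a word is seen.
        vocab.setdefault(word, len(vocab))


def encode(sentence):
    """Map each word to its id, falling back to UNK for unseen words."""
    return [vocab.get(word, vocab[UNK]) for word in sentence.split()]


test_sentence = "the cat sat on the sofa"
ids = encode(test_sentence)
oov_words = [w for w in test_sentence.split() if w not in vocab]

print(ids)        # [1, 2, 3, 4, 1, 0]
print(oov_words)  # ['sofa']
```

The word "sofa" collapses to the same id as every other unseen word, so whatever the model might have learned about it is lost.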

One solution to this problem is to treat the input as individual characters. This approach has been shown to yield good results for many NLP tasks, despite the additional computation and memory that character-level processing requires (we have to train on long character sequences and backpropagate gradients through time across those long sequences too). More importantly, a recent comparison (2018) has shown that character-based language models do not perform as well as word-based language models on large corpora.

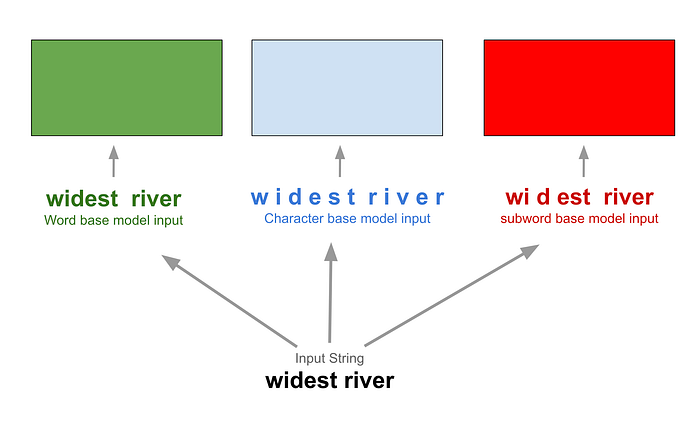

The current trend to address this is a middle-ground approach: subword representations, which balance the advantage of character-based models (avoiding OOV) against the advantages of word-based models (efficiency, performance). The accompanying image shows how input is fed to these three categories of models.
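The three input granularities can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the subword vocabulary is hand-picked, and the greedy longest-match-first split is a simplified stand-in for a learned subword scheme, not the exact tokenizer of any specific model.

```python
def word_tokens(text):
    """Word-level input: one token per whitespace-separated word."""
    return text.split()


def char_tokens(text):
    """Character-level input: one token per character, spaces included."""
    return list(text)


def subword_tokens(word, vocab):
    """Greedy longest-match-first split of one word into vocabulary pieces.

    Returns ["<unk>"] if some part of the word cannot be covered.
    """
    pieces, start = [], 0
    while start < len(word):
        end = len(word)
        # Shrink the candidate piece until it is found in the vocabulary.
        while end > start and word[start:end] not in vocab:
            end -= 1
        if end == start:
            return ["<unk>"]
        pieces.append(word[start:end])
        start = end
    return pieces


# Hypothetical, hand-picked subword vocabulary for this sketch.
subword_vocab = {"un", "break", "able", "play", "ing"}

text = "unbreakable playing"
print(word_tokens(text))       # ['unbreakable', 'playing']
print(len(char_tokens(text)))  # 19
print([subword_tokens(w, subword_vocab) for w in text.split()])
# [['un', 'break', 'able'], ['play', 'ing']]
```

Neither "unbreakable" nor "playing" is in the vocabulary as a whole word, yet both are covered by a handful of pieces, and the sequence stays much shorter than the 19 character-level tokens. Adding every single character to the vocabulary would remove the `<unk>` fallback at the cost of longer sequences for rare words.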

Below we examine two state-of-the-art language models, BERT (2018) and GPT-2 (2019). Both represent inputs as subwords but adopt slightly different approaches. The problem of how to represent input is not restricted to language models; other models these days adopt subword representations too. We look at language models specifically because, of late:

- Unsupervised learning with language models has been used as the basis for subsequent task-specific learning that needs less labeled data. BERT model for…